Prism and the Architecture of Artifact-Native AI for Science

Prism is a LaTeX-native, AI-first workspace for scientific writing. Under the hood, it’s a new pattern: the model is embedded in the artifact, not bolted onto the side. This post explains the architecture, the contracts that keep it honest, and what “artifact-native AI” changes for reliability and governance.

Axel Domingues

January’s trending topic wasn’t a new benchmark score.

It was a product shape.

OpenAI’s Prism landed as a free, LaTeX-native research workspace — and the interesting part isn’t “AI helps you write.” The interesting part is where the AI lives:

The model sits inside the paper, not beside it.

That changes everything.

A chat assistant treats your research like a prompt. An artifact-native system treats your research like a structured object: sections, citations, figures, compilation, review cycles, permissions, and provenance.

This post is the engineering lens:

- what “artifact-native AI” means,

- why Prism is a useful reference point,

- and the architecture contracts that prevent “helpful writing” from becoming unverifiable science.

If you use AI in scientific work, your non-negotiables don’t change:

- don’t fabricate data

- don’t fabricate citations

- don’t hide authorship

- don’t confuse fluency with evidence

The trend

Artifact-native AI: the model is embedded in a domain artifact (a paper) with structure, constraints, and provenance.

The shift

From “chat + copy/paste” to workspace + workflows: citations, compilation, collaboration, review, and publication prep in one place.

The risk

A writing accelerator can also accelerate bad science (fake citations, invented results, paper-mill throughput).

The thesis

Reliability comes from contracts: evidence boundaries, patch-based edits, provenance, and auditable pipelines.

Prism in one sentence

Prism is a web-based LaTeX workspace where AI is integrated into the authoring environment — intended to reduce the “tool soup” researchers juggle (editor, compiler, citation manager, notes, chat, collaborators).

That alone is useful.

But the deeper lesson is architectural:

- constraints (compile must pass)

- invariants (citations must resolve)

- provenance (what changed, by whom, when)

- and evaluation (did the system improve the artifact without lying)

That’s the pattern worth learning from.

What “artifact-native AI” means

Artifact-native AI is not “AI features inside a product.”

It’s a design where the primary unit of work is an artifact with structure and lifecycle:

- not a prompt

- not a conversation

- not “some text”

Examples of artifacts (and why they matter)

- scientific paper: sections, bibliography, figures, compilation rules

- codebase: AST, tests, lint, build, CI

- CAD model: geometry constraints, units, assemblies

- legal contract: clauses, references, jurisdiction rules, redlines

Artifacts come with native validity checks.

That is the leverage.

Chat-native

Context is a prompt. Output is text. Validation is external and manual.

Artifact-native

Context is a structured object. Output is a patch. Validation is part of the workflow.

Why this is a real architecture shift (not a UI tweak)

In 2023–2025, “LLM product architecture” matured around:

- retrieval + grounding

- tool use + sandboxes

- eval harnesses + rollouts

Prism pulls those patterns into a single artifact-centric runtime.

Instead of “ask chat, then paste,” the system can do things like:

- target a specific section

- propagate changes to references

- keep a bibliography consistent

- enforce compilation / formatting constraints

- preserve collaborator boundaries

- log what the model did

That’s why it’s trending: it feels like “a new IDE,” but for scientific writing.

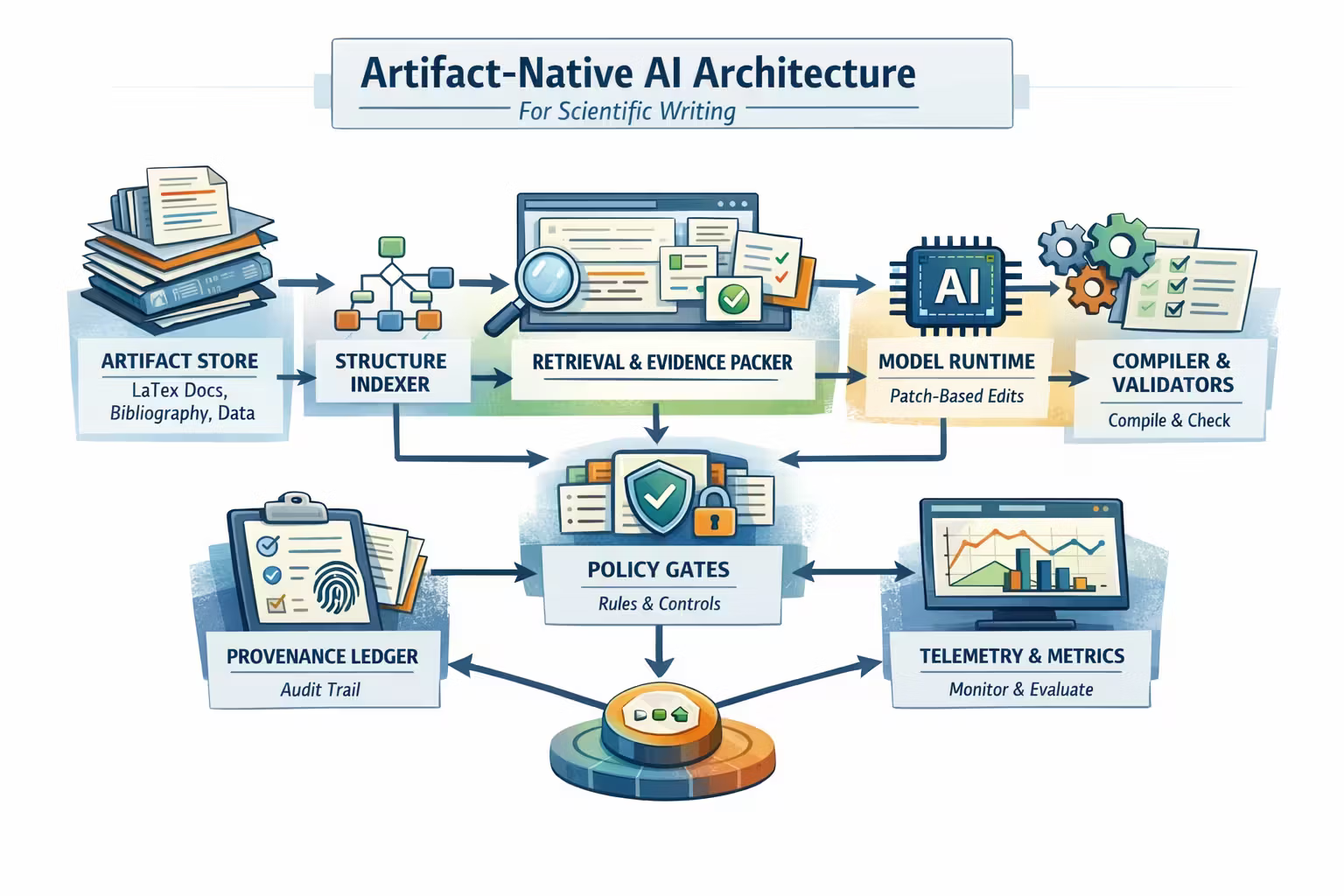

The architecture blueprint: Prism as a reference pattern

Let’s talk about the components you need to make artifact-native AI work without devolving into vibes.

At a high level, you’re building seven subsystems.

The paper is not “text.” It’s a project:

- LaTeX sources

- bibliography database

- figures and tables

- build config (packages, compilation)

- metadata (authors, affiliations, target venue)

Contract: everything the model edits must be representable as a versioned change in this store.

To act safely, the system needs structure:

- section tree (Introduction → Methods → …)

- citation graph (who cites what)

- figure/table references

- definitions used across sections

Contract: “edit Methods paragraph 2” must map to a stable region, not a fuzzy location.

Science writing is citation-heavy. “Sounding right” is not enough.

Retrieval can pull:

- paper library items

- notes and annotations

- prior work summaries

- your own lab’s internal docs (with ACLs)

Contract: the model can only cite from allowed sources, with stable IDs, and citations must resolve.

The model should not output a full paper blob. It should output structured edits:

- insert text at location X

- rephrase paragraph Y

- add citation Z to sentence S

- propose figure caption update

Contract: outputs must be parseable into patches and validated before merging.

LaTeX gives you a rare gift: a hard validity check.

Validators include:

- compilation success

- missing references / broken links

- bibliography resolution

- style rules (venue template constraints)

Contract: no merge without passing the validation suite.

Artifact-native systems need “who did what” as first-class data:

- human vs AI edits

- prompts/instructions (redacted as needed)

- sources used

- model version

- tool actions and results

Contract: you can reconstruct how a paragraph came to exist.

If you can’t measure it, you can’t trust it.

You need metrics like:

- citation validity rate

- compile pass rate after AI edits

- revert rate of AI edits

- reviewer-driven change rate (post-submission)

- time saved per workflow stage (editing, citations, formatting)

Contract: quality is measured on artifacts, not on “it felt helpful.”

This is the point:

It’s interesting because it forces a design where AI interacts with a structured project that can be validated, audited, and rolled back.

The core contract: treat model output as a patch, not content

If you learn one pattern from artifact-native AI, make it this:

The model proposes patches. The system decides what merges.

Patch-based design buys you:

- reviewability (diffs)

- rollback (revert commit)

- validation (compile, citation checks)

- governance (approvals)

Here’s what patch-shaped output looks like conceptually:

{

"intent": "revise_section",

"target": {"section_id": "methods", "range": "p2"},

"edits": [

{"op": "replace_text", "old_hash": "sha256:...", "new_text": "..."}

],

"citations": [

{"op": "add_citation", "cite_key": "Smith2022", "sentence_ref": "methods.p2.s3"}

],

"evidence": [

{"source_id": "library:paper:10.1234/abcd", "used_for": "claim_support"}

]

}

No magic required. Just system discipline.

- broken references

- invented citations

- inconsistent terminology

- and untraceable authorship

Truth boundaries for scientific writing

In 2023 I started framing LLM systems around truth boundaries: what must never be wrong.

For Prism-like systems, the truth boundary is not “all words must be correct.” That’s not achievable.

But you can define strict invariants.

Citation integrity

Every citation must resolve to an actual source. No invented papers. No fake DOIs.

Claim–evidence alignment

High-risk claims must be traceable to evidence chunks or explicit “author asserts” labels.

Data & methods honesty

No fabricated experiments, parameters, or results. If data isn’t present, it’s not “summarized.”

Provenance

You can answer: what was AI-generated, what was human-edited, and what sources were used.

This is how you keep “writing acceleration” from becoming “credibility erosion.”

“AI slop” is an architectural failure mode (not just a cultural one)

When people worry about paper mills and fake research, it’s tempting to treat it as “bad actors.”

But systems create incentives.

If your tool makes it easy to:

- generate plausible prose,

- attach plausible citations,

- and produce a PDF that compiles,

…then you have increased the throughput of low-integrity submissions.

So the right response is system-level:

- verified citations

- provenance

- anomaly detection in workflows

- rate limits on high-risk operations

- and “prove it” gates for claims that would otherwise be cheap to fabricate

If you don’t design it, it will still exist. It will just be invisible until a scandal forces it into existence.

How to design a “context assembler” for a paper (without stuffing everything)

Prism-like systems naturally push you away from “paste the entire internet into the prompt.”

A scientific artifact gives you structure — use it.

A good context packet has separation-of-concerns:

- Policy (what the model is allowed to do)

- Task (the edit request)

- State (section text + local definitions)

- Evidence (retrieved sources, capped)

- Constraints (patch schema + validation rules)

Here’s the part that matters: budgets.

Pick a small working set

Give the model:

- the target section

- neighboring paragraphs for coherence

- local glossary/definitions extracted from the paper

Retrieve evidence, don’t paste libraries

Pull top-k sources relevant to the edit (and only those). If you can’t justify why a document is present, it shouldn’t be in the prompt.

Cap and segment untrusted content

Treat retrieved text as data. Never let retrieved text outrank policy.

Emit a loggable “context packet”

Record:

- section IDs included

- evidence IDs included

- token counts

- model version

- patch validation outcomes

This is “artifact-native” in practice: structure lets you keep context small and targeted.

Reliability checklist: what to validate before merge

Prism gets credibility if it makes correctness cheaper than nonsense.

So every AI-generated patch should trigger a cheap validation suite.

- Compilation passes (or at least static checks on references)

- No missing citations / broken cite keys

- No new citations unless they resolve to a real source record

- No figure/table references added without corresponding artifacts

- Terminology consistency check (key terms don’t flip mid-document)

- “High-risk claims” require evidence links or explicit author-assert flags

- Compile pass rate after AI edits

- Revert rate of AI patches (by category)

- Citation validation failures (by source type)

- Evidence usage rate (did answers actually use retrieved evidence?)

- Latency and token cost per patch operation

- Spike in citation failures → lock citation insertion behind human review

- Spike in compile failures → restrict to rewrite-only operations

- Spike in revert rate → downgrade model or tighten patch constraints

- Suspicious throughput patterns → rate limit projects / accounts

This is how “AI in science” becomes operable instead of controversial.

If you’re building Prism-like systems: start here

You don’t need a giant platform to adopt the artifact-native pattern. You need the right sequence of constraints.

1) Make the artifact addressable

Add stable IDs to sections, paragraphs, figures, and citations.

2) Force patch-shaped outputs

Reject “freeform rewrite the whole paper” as a default mode.

3) Install mechanical validators

Compile checks, cite resolution, reference integrity, style rules.

4) Add provenance before you need it

Log model version, evidence sources, patch diffs, and approvals.

5) Build evals on artifact outcomes

Measure compile pass, citation validity, revert rate — not user vibes.

January takeaway

Prism is a signal that the next wave of AI products won’t be “chat in a box.”

It will be AI embedded into structured artifacts — where reliability is earned through validation, provenance, and governance contracts.

Resources

OpenAI — Introducing Prism

The official product framing: Prism as a free, AI-native workspace for scientific writing and collaboration.

FAQ

Not if it’s done correctly.

Artifact-native AI is less about rewriting sentences and more about:

- section-scoped edits

- citation-aware changes

- compilation-safe patches

- provenance and review workflows

The value isn’t “better prose.” It’s fewer broken artifacts and more auditable edits.

No.

But it changes where hallucinations can hide.

If the system requires:

- citations to resolve

- claims to link to evidence

- patches to compile

…then a large class of “confident nonsense” becomes catchable before merge.

Treating it as “chat, but embedded.”

If you don’t enforce patch outputs, validation, and provenance, you’ll get:

- higher throughput of low-integrity content

- harder debugging

- and security/integrity incidents you can’t audit.

The artifact must remain the source of truth.

Anything irreversible or reputation-critical:

- submission actions

- authorship declarations

- claims of new results

- final wording in Methods/Results that could be interpreted as fabricated

The model can propose. Humans should sign.

OpenClaw: A Viral Agent, a Skills Ecosystem, and the Supply-Chain Reality Check

OpenClaw went from “personal AI assistant” to “security headline” in weeks. This post explains what OpenClaw actually is (agent runtime + gateway + skills), why skills are a software supply chain, and the architecture patterns that make a self-hosted agent survivable.

Reference Architecture v2: the Operable Agent Platform

This is the 2025 finale: a practical reference architecture for running fleets of agents with governance—connectors you can trust, traces you can debug, evals you can ship, and humans you can hand off to.