OpenClaw: A Viral Agent, a Skills Ecosystem, and the Supply-Chain Reality Check

OpenClaw went from “personal AI assistant” to “security headline” in weeks. This post explains what OpenClaw actually is (agent runtime + gateway + skills), why skills are a software supply chain, and the architecture patterns that make a self-hosted agent survivable.

Axel Domingues

February’s trending topic wasn’t “agents” in the abstract.

It was OpenClaw in the wild.

A self-hosted, open-source “personal AI assistant” that can:

- talk to your apps

- run scheduled tasks

- orchestrate tools

- and load community “skills”

…and then immediately triggered the most predictable second-order effect in software:

An ecosystem appeared. And attackers joined on day one.

This article is expert teaching, not a hype tour:

- what OpenClaw is (architecturally),

- what “skills” really mean (security-wise),

- and how to run an agent runtime like a grown-up system instead of a demo.

- your files

- your browser session

- your tokens

- your email

- your terminal

Treat it like production software, even if it’s “just on your laptop.”

What OpenClaw represents

An agent runtime + gateway + skills ecosystem that runs locally and touches real accounts and real state.

The February lesson

“Skills” are not prompts. They are packages: code + instructions + dependencies + trust decisions.

The failure mode

Agent ecosystems are supply-chain ecosystems. A public registry becomes an attack surface immediately.

The design goal

Make agent execution auditable, least-privileged, sandboxed, and reversible.

What OpenClaw actually is (in architecture terms)

People describe OpenClaw as “a personal AI assistant.”

That’s the marketing wrapper.

The more accurate description is:

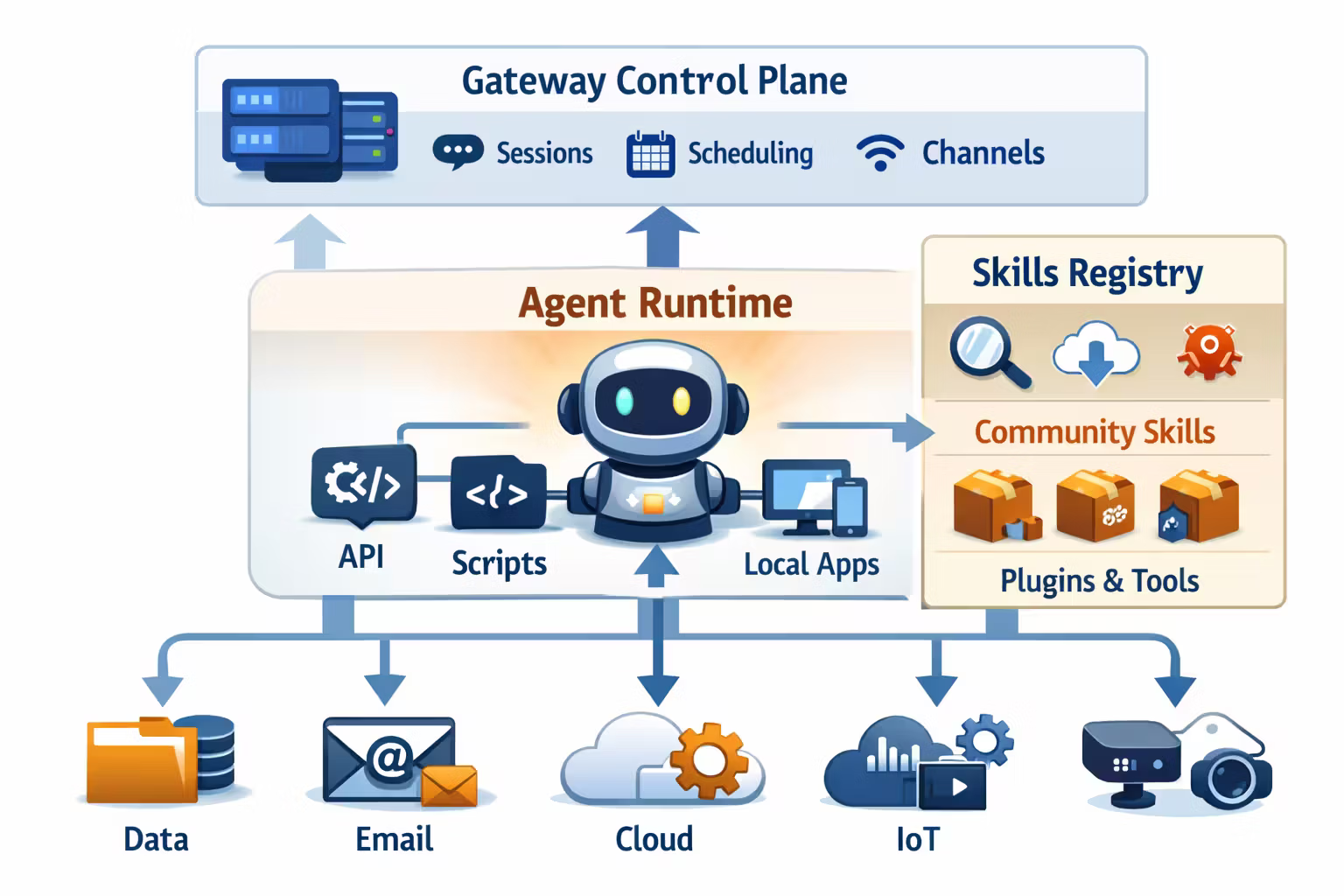

OpenClaw is a self-hosted agent platform with a control plane (gateway), an agent runtime, and a plugin system (“skills”).

Think of it like a lightweight, local-first automation platform:

- a runtime that can call tools and stream results

- a control surface (sessions, scheduling, channels)

- a marketplace/registry where “capabilities” are shared

That structure is why it went viral: it feels like “an assistant,” but behaves like “a platform.”

Why the skills model changes everything

In LLM land, we got used to “prompt injection” as a risk.

OpenClaw shifts the risk to something more classic:

dependency injection.

A typical OpenClaw workflow looks like:

- you ask for a task (“connect to my email”, “post to Slack”, “sync calendar”)

- the agent selects or suggests a skill

- the skill tells the runtime how to act, what tools to use, and what dependencies it needs

- you approve something (explicitly or implicitly)

- your machine executes it with your identity

That’s not “AI text.” That’s program execution, with an LLM in the control loop.

If you don’t treat skills like software packages, you will get supply-chain’d.Installing a skill is basically installing privileged code.

The supply-chain twist: “instruction files” become an execution vector

One of the most interesting OpenClaw-specific lessons is that attackers don’t need to hide malware in compiled binaries.

They can hide it in the human-in-the-loop workflow.

A malicious skill can:

- look legitimate in a registry

- read as “reasonable setup steps”

- and then steer the user into executing a harmful installer or dependency

The new trick is psychological:

- users trust their agent

- agents present steps with confidence

- humans copy/paste “just to make it work”

This is not a new class of security problem.

It’s classic social engineering — amplified by:

- agent authority (“the assistant told me to do it”)

- automated discovery (“install this to proceed”)

- and the speed of viral ecosystems

Before the first incident.Not after the first incident.

The OpenClaw threat model (practical, not paranoid)

Here’s the threat model I’d use if I had to defend an OpenClaw deployment.

What happens: a skill looks useful (email, wallet, scraping, integrations), but contains:

- harmful setup steps

- hidden exfiltration behavior

- dependency chains that pull malware

Why it’s plausible: registries are open by default; review doesn’t scale.

Primary defense: allowlists + signature verification + scanning + quarantine.

What happens: the agent presents steps that feel official:

- “install this prerequisite”

- “run this bootstrap”

- “paste this into your terminal”

Primary defense: policy gates + “dangerous action” UX + sandboxed execution (no access to real secrets).

What happens: if the runtime can read:

- browser profiles

- shell history

- environment variables

- token files

…then a single malicious tool call can exfiltrate everything.

Primary defense: identity isolation + dedicated credentials + file-system scoping + network egress controls.

What happens: people wire OpenClaw into bot tooling to do things websites explicitly disallow (scraping, bypassing protections, credential stuffing, etc.).

Primary defense: don’t ship “bypass” capabilities; enforce tool scopes; log and rate-limit risky operations.

The architecture pattern that makes OpenClaw survivable

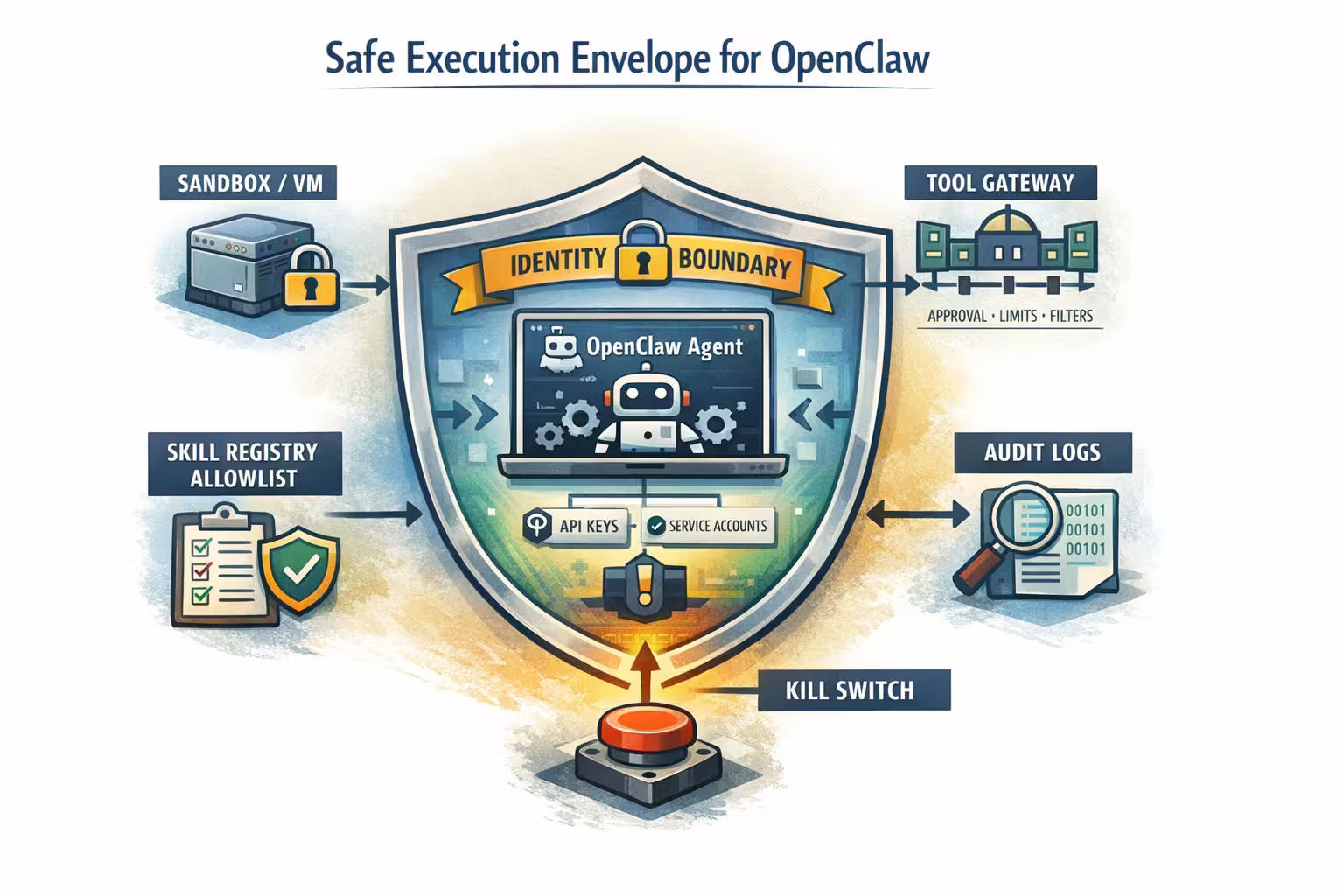

You don’t “secure an agent.”

You secure an execution environment.

Here is the pattern I recommend for any self-hosted agent runtime — and OpenClaw is a perfect case study.

1) Isolation: run the agent in a box

The rule is simple:

The agent runtime should not live on the same machine identity as your life.

Practical options:

- a dedicated OS user with minimal permissions

- a VM with no personal files mounted

- a container with strict volume mounts and network policies

Isolation isn’t a nice-to-have. It’s the only meaningful blast-radius control.

2) Identity: dedicated credentials only

Treat the agent as a service.

- separate API keys

- separate OAuth tokens

- separate accounts where possible (especially for business use)

No “borrow my real browser session.” That’s how “assistant” becomes “account takeover.”

3) Tool gateway: capability boundaries, not vibes

OpenClaw will call tools.

So put an explicit gateway in front of tools:

- allowlists

- scopes

- per-tool budgets

- human gates for high-risk actions (send money, publish, delete, deploy)

Your model is not the boundary. Your gateway is.

4) Skill governance: treat the registry like npm, not a prompt library

A good skills pipeline looks like package management:

- fetch skill

- verify signature (or at least integrity hash)

- static scan (content + dependency manifest)

- policy check (requested capabilities)

- quarantine unknown skills

- run in sandbox

- monitor runtime behavior

- keep an audit trail

A concrete “skills contract” that scales beyond hobby usage

The biggest missing piece in most viral ecosystems is a capability contract.

So here’s a simple one you can apply even if the upstream project doesn’t enforce it yet.

Every skill must declare:

- what it can access (filesystem, network, apps)

- what it can write (and where)

- what external domains it can call

- what credentials it needs (and how they’re scoped)

- whether it can spawn processes

- whether it can run shell commands

And the runtime should enforce:

- deny by default

- explicit grant per skill

- runtime auditing

- easy revoke

The skill manifest

A machine-readable capability declaration (think “mini-permissions model”).

The policy engine

A deterministic gate that decides: allow, deny, require approval, or sandbox-only.

The deployment playbook (home vs work)

Because OpenClaw is self-hosted, people will deploy it in two very different modes. The safety posture should differ.

Home / tinkering mode (acceptable risk, still disciplined)

- run in a separate OS user or VM

- don’t mount your main home directory

- don’t reuse your main browser profile

- install skills from a curated list only

- log everything (even locally)

Work / team mode (treat it like production)

- isolated runtime (VM/container) with network policies

- dedicated service accounts + OAuth apps

- private skill registry / allowlist

- mandatory code review for internal skills

- approval gates for high-risk actions

- retention policies for logs (privacy-aware, audit-ready)

- isolation

- dedicated identities

- and a skill allowlist

You deployed an ungoverned automation platform with unclear accountability.

What to measure (so you can improve it)

If you can’t measure skills, you can’t govern skills.

A minimal dashboard for an OpenClaw deployment:

- Skill install events (who installed what, from where, with what checksum)

- Capability grants (which skills have filesystem/network/process access)

- High-risk tool calls (send/email/publish/delete/deploy) + approval rate

- Egress domains contacted by skills (and drift over time)

- Secrets access attempts (expected vs suspicious)

- Rollback actions (skill quarantines, revocations, runtime resets)

If it’s a subsystem, it needs telemetry.

The broader February takeaway

OpenClaw is not “good” or “bad.”

It’s inevitable.

When agent runtimes become easy to run locally, ecosystems will form. Registries will emerge. People will share “skills.” Attackers will publish “skills.”

So the core lesson is architectural:

February takeaway

OpenClaw is a reminder that the moment an agent can install capabilities, you are operating a software supply chain.

Safety doesn’t come from better prompts. It comes from isolation, identity boundaries, policy gates, and auditable skill governance.

Resources

OpenClaw — GitHub repo

Project overview, architecture notes, and docs links (gateway, runtime, sessions, skills).

OpenClaw — “Introducing OpenClaw”

Project announcement and rebrand context (Clawdbot → Moltbot → OpenClaw).

Microsoft Security Blog — Running OpenClaw safely

Clear security framing: skills are privileged, isolate environments, avoid mixing with non-dedicated credentials.

Snyk — Malicious ClawHub skill case study

A concrete example of “skill-as-social-engineering”: instruction files guiding humans into unsafe installs.

FAQ

Functionally, it behaves more like a local agent platform:

- runtime that executes tool calls

- session/control plane concepts

- onboarding flows and companion tooling

- a skills ecosystem and a public registry

Once you have a registry, you have a supply chain. That’s the defining shift.

No.

A lot of the risk is not “a binary contains a virus.” It’s workflow manipulation:

- convincing instructions

- dependency chains

- humans copying commands

- credentials with too much scope

You need scanning and isolation and policy gates and audit trails.

Run it in a dedicated environment with zero personal secrets:

- separate OS user or VM

- no mounts of sensitive directories

- dedicated accounts/tokens if you connect services

- install skills only from a curated allowlist

Treat it like you’re evaluating a new automation platform — because you are.

Mixing identities.

The fast path is:

- “let it use my browser profile”

- “let it read my files”

- “let it access my Slack”

That turns experimentation into compromise potential instantly.

Separate identities and isolate first. Convenience can come later.

Agentic AI Is Becoming a Cybersecurity Problem

Once agents can take actions, they become non-human identities with privileges — and that turns “AI productivity” into “security surface.” This post explains the new threat model, why “kill switches” are really identity and execution controls, and the architecture patterns that make agentic systems operable.

Prism and the Architecture of Artifact-Native AI for Science

Prism is a LaTeX-native, AI-first workspace for scientific writing. Under the hood, it’s a new pattern: the model is embedded in the artifact, not bolted onto the side. This post explains the architecture, the contracts that keep it honest, and what “artifact-native AI” changes for reliability and governance.