The Model Router Era

Weekly frontier releases turned “pick a model” into an operational anti-pattern. In April 2026, routing became the real product: choose models per request, enforce eval gates, and budget cost/latency/security like an SRE system.

Axel Domingues

April’s hot topic wasn’t “a new model is better.”

It was the operational consequence of too many good models arriving too fast.

When releases land weekly (and pricing, latency, tool use, and safety behavior shift each time), the old decision:

“Which model does our product use?”

…stops making sense.

The new decision is runtime:

“Which model should handle this request, under this budget, with this security posture?”

That’s the model router era.

You’re not buying a model. You’re operating a policy-controlled inference fabric.

This month’s teaching goal is simple:

- what a router really is (and isn’t),

- how to gate model upgrades with evals,

- and how to turn cost + security into first-class routing constraints.

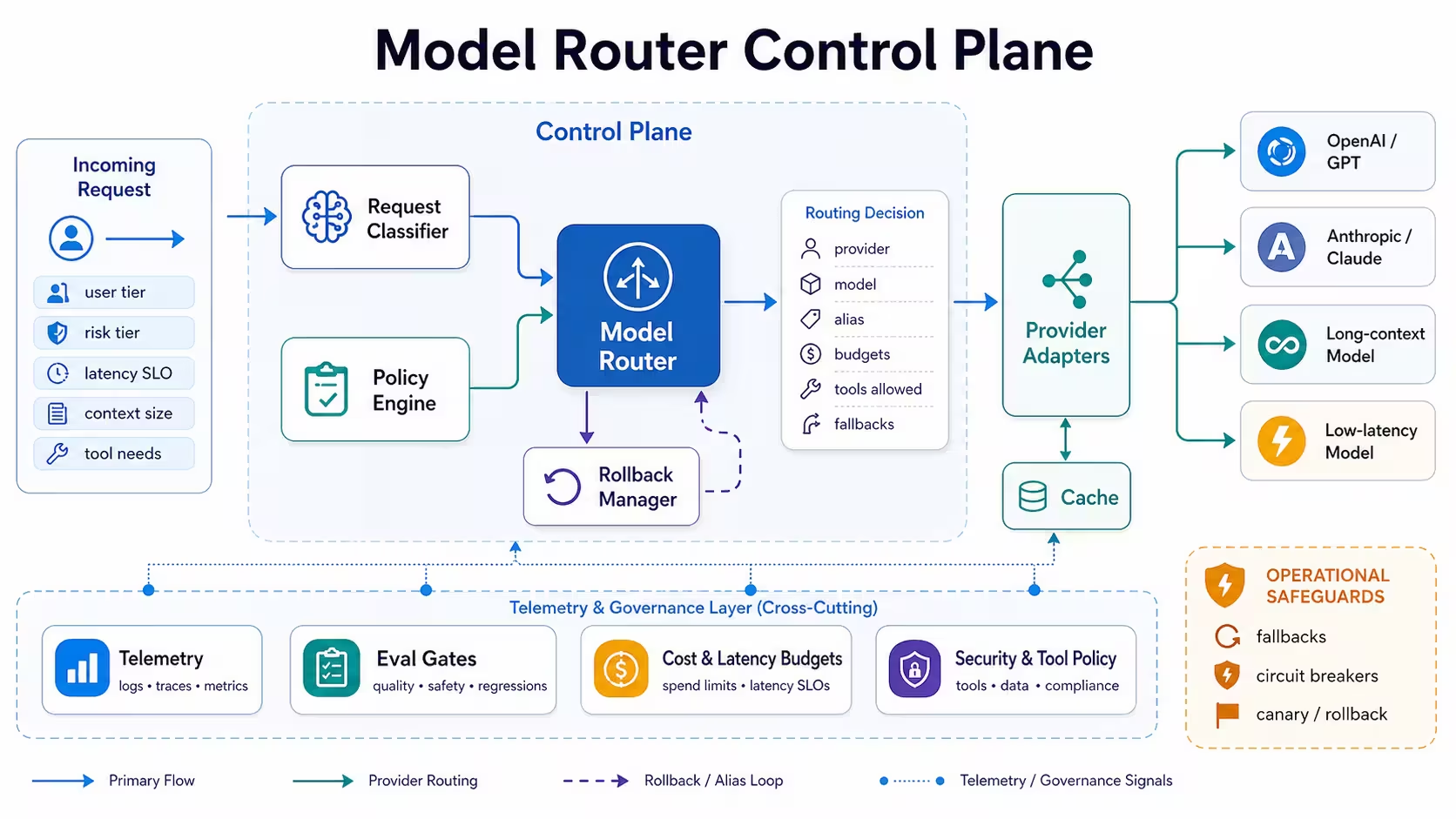

A router is a control plane:

- it selects a provider/model/version

- under budgets and policies

- and it records decisions so you can debug outcomes.

Why now

Frontier releases are frequent enough that “one model everywhere” becomes fragile, expensive, and hard to operate.

The real product

The router: policy + budgets + eval gates + fallbacks — the thing you can defend in an incident review.

The engineering job

Build a routing pipeline that is measurable, reversible, and safe under failures and drift.

The trap

Routing without governance becomes “randomness at scale”: inconsistent behavior, surprise bills, and untraceable failures.

The world that created routers

Three forces converged:

- Capability is now multi-dimensional

- one model is best at code

- another is best at reasoning-style tasks

- another is best at low-latency chat

- another is best at long context

- Cost is no longer a rounding error

- bigger contexts

- longer outputs

- tool calls

- retries

- Security posture varies by request

- what data is in the prompt?

- what tools will be invoked?

- what environment is allowed?

- what audit requirements apply?

So the router becomes the only stable abstraction:

turn “model choice” into a deterministic policy decision you can observe and roll back.

What a model router is (and what it isn’t)

A router is a component that returns a routing decision.

Not “the answer.” Not “a prompt.” A decision.

At minimum, it chooses:

- provider

- model

- version (or alias)

- tool permissions

- budgets (token cap, time cap, tool cap)

- safety mode (and escalation rules)

Router output

A small structured object describing where to run and under what constraints.

Router input

Request metadata: user tier, risk class, latency SLO, context size, tool needs, compliance constraints.

A concrete decision object (conceptual)

{

"route": {

"provider": "openai",

"model": "gpt-5.5",

"alias": "prod-stable",

"region": "eu"

},

"budgets": {

"max_input_tokens": 12000,

"max_output_tokens": 900,

"max_tool_calls": 5,

"deadline_ms": 9000

},

"policy": {

"risk_tier": "medium",

"tools_allowed": ["search", "kb_retrieve", "github_read"],

"requires_approval_for": ["email_send", "deploy"]

},

"fallbacks": [

{"provider": "openai", "model": "gpt-5.4", "when": "timeout_or_5xx"},

{"provider": "anthropic", "model": "claude-sonnet", "when": "rate_limit"}

]

}

That’s what you operate.

Router patterns that actually work

Most teams try “learned routing” immediately.

In practice, the first 80% of value comes from boring patterns.

Use explicit rules for:

- compliance (data residency, vendor allowlists)

- user tier (free vs paid)

- latency class (interactive vs batch)

- tool family (code, search, internal ops)

- prompt size bands

You can add a learned router later, but rules are debuggable and audit-friendly.

Start with a cheaper model and escalate only when needed.

Example triggers:

- low confidence / low score

- schema validation fails

- tool plan is incomplete

- user asks for “more depth”

Cascades reduce cost without sacrificing peak quality.

Route by artifact type:

- “code patch” lane (tool use + repo read-only)

- “policy answer” lane (retrieval + strict citations)

- “creative” lane (lower factual constraints)

- “support triage” lane (short outputs, strict structure)

This reduces cross-contamination of behaviors and makes evals clearer.

A learned router is valuable once you have:

- outcome labels (success/fail)

- cost labels (tokens, tool calls)

- latency labels (p50/p95)

- safety labels (refusal, policy violations)

Without telemetry, learned routing becomes learned guessing.

The missing piece: eval gates (routing without evals is gambling)

Routing sounds like flexibility.

But flexibility without gates is how you ship regressions quickly.

A model router needs two evaluation loops:

- Offline evals (before rollout)

- Online guardrails (during rollout)

Offline evals

A curated suite that matches your tasks and failure modes (not generic benchmarks).

Online guardrails

Canaries, shadow traffic, and rollback triggers based on real metrics.

What to measure (practical, not academic)

Offline:

- task success rate (by task family)

- schema validity rate (structured outputs)

- citation validity rate (if you cite)

- tool-use correctness (did it call the right tools, safely?)

- refusal/over-refusal rate (policy behavior)

Online:

- p95 latency per route

- cost per request (tokens + tool calls + retries)

- fallback rate (how often did we fail over?)

- incident signals (timeouts, 5xx, rate limits)

- “human override” rate (edits, re-asks, escalations)

- new failure modes

- new refusal behaviors

- new tool-call patterns

- new cost profiles

Budgets are the router’s real language

People talk about routing like it’s “quality optimization.”

In production, routing is budget management:

- latency budget (interactive vs batch)

- token budget (input + output caps)

- tool budget (number and type of side effects)

- risk budget (what actions are allowed without humans)

The budget stack (the thing you enforce)

Step 1 — Define latency classes

- interactive: tight deadline, limited tool calls

- background: slower, cheaper, more retries allowed

- batch: large payloads, discounted tiers, strict caps

Step 2 — Cap output like you mean it

Unbounded output is an invoice generator. Cap output per route and use “continue” only when necessary.

Step 3 — Put a ceiling on tool calls

Tool use is side effects + cost. Limit calls and require explicit escalation for risky tools.

Step 4 — Make budgets visible in logs

Every response should be traceable to:

- route chosen

- budgets enforced

- fallbacks taken

Budgets don’t reduce quality. They make quality reproducible.

Security routing: treat the prompt as sensitive input

By April 2026, “model routing” is also “security routing.”

Because not all requests are equal:

- some contain internal IP

- some contain regulated data

- some trigger privileged tools

- some come from untrusted sources (web/email/doc uploads)

So you need policy-driven routing based on data class and action class.

Data classification

Route based on what’s in the prompt: public, internal, confidential, regulated.

Tool scopes

Route based on actions: read-only vs write, human approval required, sandbox-only.

The simplest safe policy

- Confidential data → restricted vendor allowlist + strict logging + no external tools

- Untrusted docs → retrieval sandbox + tool gateway + injection-resistant prompt layout

- Write actions → require explicit approval state or step-up confirmation

- Payments / deploy / delete → human gate, always

The implementation blueprint (router as control plane)

Here’s the architecture spine that scales without turning into chaos.

Key components:

- Request classifier

- extracts features: prompt size, tool needs, risk tier, user tier, latency class

- Policy engine

- allowlists, residency constraints, tool scopes, budgets

- Router

- selects route + fallbacks, emits decision object

- Provider adapters

- normalize APIs across vendors, handle retries/timeouts, enforce caps

- Telemetry

- logs route decisions, costs, latencies, fallbacks, validation outcomes

- Rollback manager

- flips aliases (“prod-stable”) back to prior versions instantly

This is how you make “many models” feel like one operable system.

Failure modes (what breaks first)

Routing introduces new classes of bugs.

Here are the ones I’d expect to see in the first month.

Symptom: identical requests get different answers depending on route chosen. Fix: define task lanes, reduce randomness, pin routes for certain workflows, and add consistency evals.

Symptom: token usage creeps up as models change verbosity or tool behavior. Fix: enforce output caps, measure token burn by route, and add cost regression tests.

Symptom: a new model calls tools more often or in unsafe sequences. Fix: tool gateway + tool-use eval suite + per-route tool budgets.

Symptom: provider degradation triggers widespread fallbacks and multiplies load. Fix: budgets + circuit breakers + controlled degrade modes (cheaper routes, fewer tools).

Symptom: the model starts refusing too much or complying too much after an upgrade. Fix: policy behavior evals and route pinning for high-risk workflows.

April takeaway

The frontier is moving too fast for static decisions.

So the stable posture for 2026 is:

April takeaway

Stop selecting “a model.”

Build and operate a model router:

- routes are policy decisions

- evals are release gates

- budgets are the contract

- rollbacks are mandatory

Resources

GPT-5.5 release coverage (The Verge)

A clear summary of why newer frontier releases increasingly emphasize tool use, efficiency, and “real work” positioning.

DeepSeek V4 on Huawei Ascend (Reuters)

A reminder that model availability, hardware optimization, and geopolitical supply chains shape what “routing” can even mean.

Copilot auto model selection (GitHub Changelog)

A mainstream signal: “auto routing” is becoming a user-facing product feature, not just an infra trick.

GPT-5.5 in Copilot model picker (GitHub Changelog)

Routing is now explicit: products are shipping model selection as an operational control surface.

FAQ

Often, yes.

Even within one vendor you still have:

- multiple model tiers

- different latency/cost profiles

- tool-use differences

- version rollouts

Routing is how you make those differences explicit and operable.

A rules-based classifier + policy engine that selects between:

- a cheap default model

- a “hard problems” model

- a long-context model

- and a safe fallback

With logs, budgets, and a rollback alias.

Routing without eval gates.

They treat routing as “flexibility” and forget it’s also “risk surface.” If you can’t measure per-route outcomes, you can’t trust the router.

Yes — after telemetry exists.

Learned routers can optimize cost/quality well, but only if you have labels and stable measurements. Start with rules, then learn.